If you haven’t used Photoshop lately, you’re missing a prime example of how AI is really going to change the world. With new features like Generative Fill and Generative Expand, Adobe is showing that the AI revolution will not be one big event, but a creeping, steady integration into our daily lives. So I decided to put it to the ultimate test.

TRANSCRIPT:

Did you know that you can use generative AI directly in Photoshop?

If you use Photoshop on a regular basis, this is not news to you. If you don’t use Photoshop on a regular basis, you might not know about this but also you might not care? Because you don’t use Photoshop.

Either way, stick around, because this isn’t just a tutorial. There’s a bigger point to all this.

But in the 2024 update to Photoshop, they included several generative AI tools like generative fill, where you just select a part of your photo, tell it what you want to put there and it just… puts it there.

And generative expand, that lets you expand out from your subject and fill in what’s around them.

It’s not perfect, and sometimes it makes weird choices, but it’s kinda like how ChatGPT works by just predicting what the next word should be. This is just predicting what it thinks should be there based on what it sees at the edges of the image.

And I got curious like, what if you did that generative expand over and over again, like what if you did it 100 times…

How many times could you do it before it starts to get weird?

The answer… Not very many.

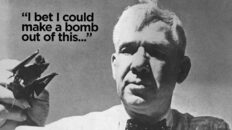

So I took a shot of myself, which you can see here, and I expanded it. And I decided to use the grid that the crop tool creates and made it so the size of the photo was the same as one of the cells created by the grid. That way all the expansions would be the same. I think it came out to 300%. Did the generative expand, and got this…

I realized pretty quickly that if I just kept expanding the image over and over, the size of the image was going to become unsustainable, so I reduced the image size back down to 4K resolution before expanding it again.
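To put rough numbers on why the image size gets out of hand, here’s a quick sketch of the arithmetic. It assumes each expansion is exactly 3x per side (the 300% figure above is approximate) and that the starting frame is 3840x2160, which is one common meaning of "4K" but isn’t stated here. The helper names `expanded_size` and `total_zoom` are made up for illustration and have nothing to do with Photoshop itself.

```python
# Assumptions (not from the source): "4K" means 3840x2160, and each
# expansion scales both sides of the image by exactly 3.
START_W, START_H = 3840, 2160
FACTOR = 3

def expanded_size(steps, w=START_W, h=START_H, factor=FACTOR):
    """Pixel dimensions after `steps` expansions with no downscaling."""
    return w * factor**steps, h * factor**steps

def total_zoom(steps, factor=FACTOR):
    """Linear zoom-out relative to the first frame after `steps` expansions."""
    return factor**steps

for steps in (1, 5, 10):
    w, h = expanded_size(steps)
    print(f"{steps:>2} expansions: {w:,} x {h:,} px ({w * h / 1e6:,.0f} megapixels)")

print(f"Zoom-out after 100 expansions: about {total_zoom(100):.2e}x")
```

Under those assumptions, a single expansion already produces an 11,520 x 6,480 image, and the size multiplies by nine in pixel count with every step after that. Shrinking back down to 4K each time keeps every file the same size while the cumulative zoom keeps multiplying.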

I should probably mention that I did all of this on a livestream that I made available for members so we got to hang out and chat while I did this. Hit the join button down below if you wanna be invited to my next weird idea. Anyway…

The expansions seemed to put me in a cave for some reason, or maybe something like a bomb shelter built into a cave. There may be a story behind this, but within maybe 5 expansions it took me out of the cave and we were off across an endless landscape.

So, part of this little experiment was that I wanted to just let it be random. Remember it gives you three choices and obviously whatever choice you choose will affect the next generation and so on and so on. So if I wanted to direct where it went, I could do so by picking a different choice, or for that matter, I could have entered a text prompt to change it however I wanted. But I chose to just let randomness do its thing… For a while.

Yeah, after about an hour and a half of this endless landscape that wasn’t really changing much, I decided to nudge it a little bit to see if I could make it go a different direction. And go in a different direction it did.

So how does this work?

Without going into a whole deep dive on generative AI, because it’s out of scope for this video and it’s been covered a million times before, the first of these programs to make some waves was DALL-E, from OpenAI, which was only first revealed in January of 2021.

Yeah, believe it or not, this is still just three years old.

DALL-E was a revelation because it combined natural language processing with image generation, making it possible to enter a text prompt and get a fully realized image out of it.

It wasn’t perfect, especially with hands, it was notoriously bad with those at first, but it was a proof of concept. Also the original DALL-E wasn’t really available to the public, it was more of a research project.

DALL-E 2 made significant improvements to the original. It was announced in April of 2022 and opened up to the public over the following months, with Midjourney arriving in July of that year and Stable Diffusion one month later in August.

Yeah, 2022 was when all of this really started happening.

But one of the big problems with these image generators is that they were trained on basically all the images on the internet, meaning artists, photographers, and illustrators were all having their copyrighted work used without their permission. Which is still going on.

And to add insult to injury, these same artists and photographers also had to face the possibility of losing work because people can now just make whatever images they want instead of paying an artist to do it for them. But even more so, they stood to lose revenue they normally made by licensing their work to stock image libraries.

Stock image sites have been a whole industry for decades now, used by designers and agencies to mock up campaigns and websites and they were one of the first industries on the chopping block once generative AI art became a thing.

And one of the largest stock image libraries in the world is owned by Adobe itself. They stood to lose millions, maybe billions of dollars as people turned to these generative AI models. So in March of last year, Adobe introduced Firefly.

Firefly is Adobe’s generative AI model, and it works just like DALL-E and Midjourney, except Adobe says Firefly is trained only on the images in its stock catalog, plus openly licensed and public domain content.

Meaning Adobe already had licensing rights to all of the images, so it feels a bit more ethical, because at least the artists got paid something for their work being used in training data.

Now I tried Firefly… Honestly I was kinda underwhelmed by it compared to, say, Midjourney, which I still think has the best image quality.

And apparently I’m not alone in that opinion, because Firefly has never really taken off as a stand-alone image generator. But with the 2024 release of Photoshop, Adobe incorporated Firefly into the app. So these generative expand and generative fill tools – that’s Firefly.

And as a tool inside of Photoshop it’s been a game changer, and made possible all the things I’m talking about in this video.

Turns out even using the Z-space in After Effects wasn’t as easy as I was hoping. There was a lot of positioning the images to line up just right, so instead of just getting one clip right and then copying and pasting to all the others, Marc had to painstakingly take each shot, line it up, adjust its timing, and do that one after another, 100 times. I owe him a few beers for this.

And even then, he struggled to make the transitions as smooth as I was hoping for. It turns out that the expansions themselves created a bit of a warp on the outside of the images that prevented them from lining up perfectly. So we still get a little bit of a stutter effect, but in the end, we came out of it with this…

Overall I’m pretty happy with how this came out, though I would like it to be smoother.

Maybe if I made each expansion a bit smaller, it would be smoother. If anybody out there has a better idea, I’d be glad to hear it.
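One idea, offered as a guess rather than a diagnosis: if the zoom between two consecutive images is keyframed as a straight linear change in scale, the apparent zoom speed resets at every transition, and that can read as a pulse even when the images line up. Changing the scale geometrically keeps the apparent speed constant. The sketch below assumes a 3x step between images and uses made-up helper names. It isn’t After Effects code, and nothing here says how the clips were actually keyframed.

```python
STEP = 3.0  # assumed scale ratio between consecutive expansions

def linear_scale(t, step=STEP):
    """Scale at progress t in [0, 1], interpolated linearly from 1 to step."""
    return 1.0 + (step - 1.0) * t

def geometric_scale(t, step=STEP):
    """Scale at progress t in [0, 1], interpolated geometrically from 1 to step."""
    return step ** t

def frame_ratios(scale_fn, frames=4):
    """Ratio of each frame's scale to the previous frame's scale."""
    scales = [scale_fn(i / frames) for i in range(frames + 1)]
    return [round(b / a, 3) for a, b in zip(scales, scales[1:])]

print("linear:   ", frame_ratios(linear_scale))
print("geometric:", frame_ratios(geometric_scale))
```

With the linear version, the frame-to-frame ratio starts high and drops off over the course of each clip, then jumps back up when the next clip starts. With the geometric version, every frame grows by the same ratio. Smaller expansions would also shrink that jump, which fits the hunch above.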

But this was just an experiment – I would be interested in repeating it to see if it goes in different directions every time you do it.

I did test it out a little bit before I did the full live stream and it went in a way different direction, way more abstract.

Of course this took hours of my time and Marc’s time so I’m not sure I want to do it all again, although…

I was told about an app that apparently can do an infinite zoom in a matter of minutes, so I thought I’d check it out.

All of these AI tools are meant to save time, and this is how AI is going to enter our lives: not necessarily as a technological singularity, but as a slow creep of integration into programs and processes we already use.

There are dangers, though. In just a couple of years, the majority of images in Adobe Stock could be AI-generated. Kinda makes me wonder if it’s just a model training itself on itself.

The great AI ouroboros, if you will.
